To Enter the “Ranks” or Not…

Published by: WCET | 9/1/2011

Tags: Data And Analytics, Rankings

At the end of July, I moderated a webcast for WCET with Bob Morse and Eric Brooks from US News and World Report regarding their forthcoming ranking of online education. The purpose of the webcast was to answer the questions our members had raised – to help clarify the process of how US News plans to use the data they are collecting to determine the rankings. Unfortunately, the webcast left me staring at the same muddied water.

US News (USN) reiterated that they still have not developed the methodology for determining the rankings, as they reported in Inside Higher Ed in June. As they answered in the open questions after the webcast, “At this point there is not a methodology, as was explained on the webcast. In an ideal world, the prior year’s ranking methodology and ranking variables for online education could be included with the surveys, as is practiced with U.S. News Best Graduate and Best Colleges surveys. Unfortunately, due to the inaugural nature of these online surveys, no methodological plan can be established until U.S. News assesses the robustness of the submitted data. When there eventually is a plan it will be disclosed at the appropriate time. In future years, U.S. News hopes to be able to be more specific at an earlier stage of the survey process about its online degree ranking methodologies (at least what was used the previous year) and which data points were used in the rankings.” Frankly, this ‘groping in the dark’ methodology surprised me.

Who suffers here? The students. USN claims to empower students to make informed decisions. In reality, USN is taking the power of choice away from them. USN is encouraging students to defer to its opinion of what a quality program is rather than providing students with data to investigate the options that are important to each student’s individual professional and personal goals. Based solely on the popularity of the publication, it will be funneling students to institutions and programs that may not be a proper match.

In a recent email conversation, Vicky Phillips of GetEducated.com noted that, “US News accepts advertising on a pay per lead model. This means the company gets paid based on how successful those ads are as direct recruitment vehicles for the very schools they rank. The US Department of Education disallows paying college recruiters based on recruitment success because of the corruption in this model. If you’re a paid recruiter for a particular college, can you simultaneously operate a neutral rating agency for the same college? I’d argue not.” (It’s important to note here that GetEducated.com does its own rankings of online degrees.)

Several schools, which wish to remain anonymous, have also told me how they are struggling to decide whether to respond to this survey. Many are hesitant to answer questions without knowing the criteria for ranking. They have no way to provide context for their responses, or the questions are not wholly appropriate for their population of students. Yet at the same time, they are concerned about how they’ll be perceived or portrayed if they don’t answer the survey. The majority of online students are what have typically been called “non-traditional” – they’re older, have jobs, families and community commitments, and are motivated to go to college for either personal or career improvement. As such, many may be 5, 10, 20 or more years removed from having received a high school diploma. Yet institutions are required to report high school rank, GPA and SAT scores of their students in this survey, though in the responses to open questions USN does note, “…questions about the high school ranks and SAT scores of online bachelor’s degree students are highly unlikely to play any role in ranking of online bachelor degree programs.” So why put institutions through the effort of reporting on indicators that will not be used?

As these rankings are touted as indicators of quality in online education, I asked Ron Legon, Executive Director of the Quality Matters Program, for his opinion. He said, “Although the survey acknowledges that institutions may not track or be able to provide all the information requested (e.g., in distinguishing between online and on ground students in their programs), I’m not sure U.S. News fully appreciates how difficult it will be for most institutions to complete the instrument with meaningful information. For example, the huge disparity between the small numbers of first time freshmen in online programs and the predominantly older students, who typically have some prior higher ed background and, in most cases, are already employed, will distort many of the retention, graduation and employment statistics – to the point where they may be meaningless.”

To this point, one institution I have been in communication with, which told US News that it would not be participating in the survey, received this message:

“In order for an institution to opt out of receiving further communication regarding this year’s survey, U.S. News requires that a scanned letter or email be sent directly from the President, Provost, or academic dean referencing the decision to not participate and stating whether or not the institution offers the type(s) of online degree program(s) indicated in the survey. If your institution does offer this type of online degree program, please be advised that your institution may still be included in this year’s rankings and will still appear on the usnews.com website. When possible, U.S. News may gather other publicly-available data about your online programs.”

WHAT? So, even if an institution chooses not to provide the data, US News will still publish incomplete and potentially inaccurate data about their institution? That sounds like blackmail to me. “Answer our survey, or else!” And I can only imagine that by ‘publicly-available data’ they are referencing the IPEDS data reported on College Navigator, which (back to Ron’s point) would be pointless to report for most online bachelor’s degrees. The IPEDS graduation and retention rates only account for first-time, full-time freshmen.

Because he said it so well, I’m going to leave the conclusion in the words of Ron, “We can hope that the data collected will profile the major structural varieties of online programs that are out there, and, to this extent, the U.S. News coverage may be helpful to those of us who are trying to track the phenomenal growth of distance learning. But I can sympathize with others who have expressed concerns about any premature attempts by U.S. News to rank programs, especially without any pre-announced weighting of criteria. U.S. News would be providing a service to students and the general public by simply publishing an inventory and an analysis of the characteristics of different types of online programs uncovered by their survey. This, in itself, would help students choose among programs. But, as a news organization trying to sell magazines, I expect that U.S. News will need some headlines and give in to the temptation to rank programs based on insufficient, unreliable data and ad hoc criteria.”

We’d like to hear from you. What are your thoughts? Is your institution participating? Fill out our poll and/or leave us a comment to join this conversation.

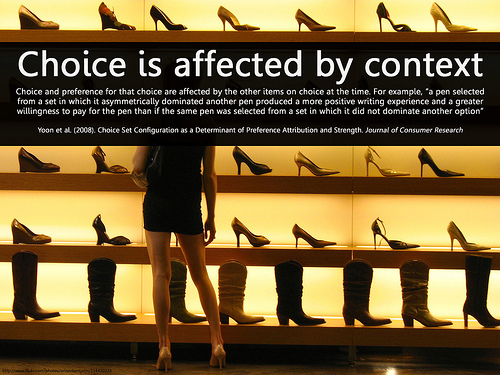

Choice is affected by context by Will Lion (on Flickr). Text: Choice and preference for that choice are affected by the other items on choice at the time. For example, “a pen selected from a set in which it asymmetrically dominated another pen produced a more positive writing experience and a greater willingness to pay for the pen than if the same pen was selected from a set in which it did not dominate another option” Yoon et al. (2008). Choice Set Configuration as a Determinant of Preference Attribution and Strength. Journal of Consumer Research www.journals.uchicago.edu/doi/abs/10.1086/587630 Background image courtesy of: www.flickr.com/photos/orinrobertjohn/114430223. This citation appears in the bottom left of the image.

1 reply on “To Enter the “Ranks” or Not…”

My institution will most likely answer the survey at the request of our president, who is proud of our accomplishments in the area of online education. I, however, do second the reservations of others because of the focused nature of online education – the adult student returning to complete an unfinished baccalaureate degree or to enhance their educational credentials in a chosen career through graduate education. Many of the data requests are not germane in our world, and we know that adults return to school with a clear sense of purpose and focus. Additionally, while many attend from out of state (usually for specific graduate programs), the research has shown that the majority of online students live within a reasonable proximity of a campus but have chosen online because it fits into their busy work/family lives and obligations. This effort by USN smacks of commercial gain at the expense of well-meaning institutions serving a public that has clearly chosen online learning as a way to meet their goals.