A Human-Centered Approach to Generative AI in Accessibility Review

Published by: WCET | 3/12/2026

When reviewing a tool for accessibility, we ask whether it will work for students in real course use. In practice, that question usually runs through a Voluntary Product Accessibility Template (VPAT®). VPATs are widely available and a standard part of many review processes, but they are often highly technical and difficult to interpret without specialized training. Too often, teams spend time translating conformance language instead of completing the judgment work of interpreting what claims mean in context, identifying risks, and addressing findings. To navigate this tension, we focused on two questions: how could we add clarity to accessibility reviews, and where could generative AI add value without replacing human oversight?

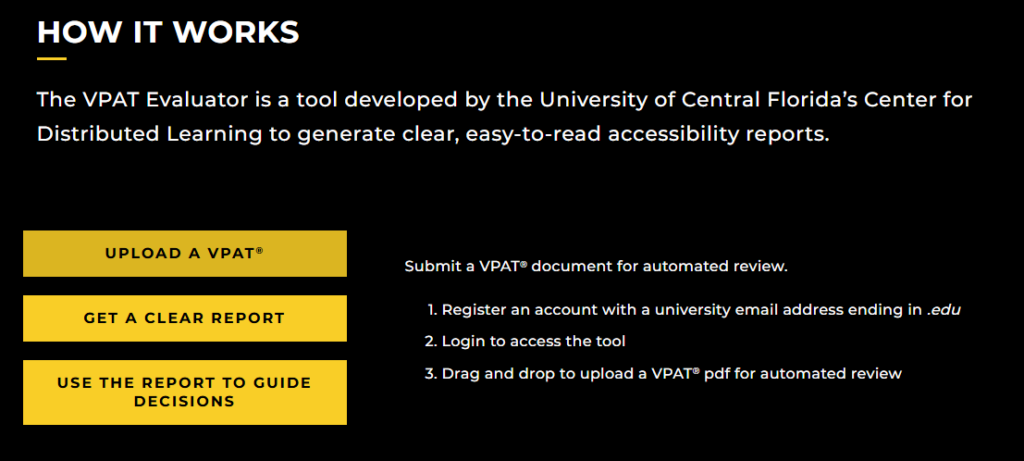

At the University of Central Florida (UCF), the Center for Distributed Learning (CDL) supports the adoption of educational technologies with a mission to make high-quality education available to anyone, anywhere, at any time. To clarify accessibility evaluation while preserving reviewer oversight, CDL developed the VPAT Evaluator, a generative AI tool that translates VPAT documentation into structured, narrative reports to support human judgment.

Reviewers upload a VPAT, and the tool generates a structured narrative that reframes technical documentation into plain-language descriptions of how a feature may function in the course environment. It surfaces claims, ratings, and explanations that indicate limitations. It then explains how those limitations may create barriers to student learning, such as when students cannot reach required materials, cannot complete activities, or cannot access key functions. The report organizes details in a consistent format so reviewers can more easily compare products and decide what to verify, which questions to ask vendors, and where to focus follow-up efforts. The tool accelerates interpretation, but verification and final decisions remain with reviewers, in line with institutional policies.

Since its release in May 2025, the VPAT Evaluator has supported more than 1,200 requests by 300+ users across 200+ institutions. We share these numbers as a signal that many campuses face a similar challenge: interpreting accessibility documentation at scale with only a limited number of specialists available to do that interpretation.

Generative AI can perform an initial scan, turning technical documentation into clear narrative summaries. A faster first review helps teams move beyond basic interpretation and focus on deeper evaluation, vendor follow-up, and instructional planning.

AI performs best within structured workflows. During development, we iterated on prompt strategies and output formats to align results with established accessibility standards and with the needs of procurement and instructional technology teams. A consistent structure makes it easier to compare products and communicate findings across roles and levels of expertise.

AI-generated output is a starting point, not a final verdict. Reviewers verify the narrative, interpret claims in context, and determine what additional information is needed. Time saved on interpretation becomes time reinvested in judgment, validation, and planning for real course use.

Higher education faces increasing expectations to provide accessible digital learning environments. Generative AI offers a practical way to help meet these expectations while also streamlining the everyday work of technology evaluation. Our experience shows that when AI handles the first pass through complex documentation, reviewers have more time to focus on the nuanced decisions that shape student learning environments.

For institutions looking to integrate generative AI into operational practices, three key takeaways stand out:

Accessibility is everyone’s responsibility, and new tools make it easier for more people to contribute their expertise.

Authors: Rebecca McNulty & Ahmad Altaher Alfayad, Center for Distributed Learning, University of Central Florida

Instructional Designer, Center for Distributed Learning at the University of Central Florida

Applications Programmer, Center for Distributed Learning at the University of Central Florida