Count All Students! Outcome Measures for Community Colleges

Published by: WCET | 4/17/2018

Tags: Collaboration/Community, Completion, Distance Education, Enrollment, Managing Digital Learning, Outcomes, Research, Student Success, WCET, WICHE

Should we count all students when analyzing higher education, or only some of them? We think all students should be included, and community colleges are often misrepresented when they are not. In this third post in our series on the IPEDS Outcome Measures data (released by the U.S. Department of Education late in 2017), we turn our attention to community colleges.

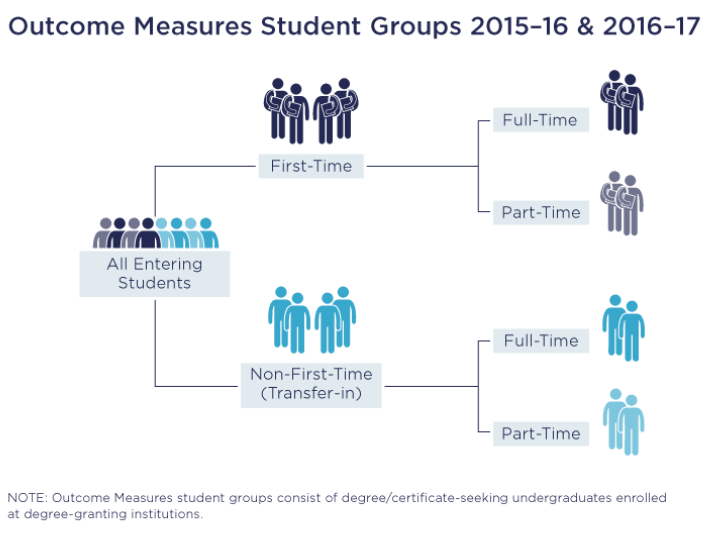

The Outcome Measures statistic is a new, more comprehensive view of what happened to the students who attend an institution. It improves on the traditional Graduation Rate, which included only first-time, full-time students and left out new part-time and transfer-in students. There are many such students at two-year institutions.

The first post in our series introduced the issue generally and the second examined the Outcome Measures results for institutions that serve a large number of students who take all of their courses at a distance. This post samples the results for the community colleges in one state.

Some overall observations:

This is the first year for the data to be released. As with any new statistic, some institutions were more successful than others in gathering and reporting the data. This should improve in the coming years.

We will also provide you with our spreadsheet of data so that you can analyze the data and perform similar analyses for your institution.

The bottom line: Institutions should insist on broader use of the Outcome Measures data when reporting to legislators, state system offices, the press, and those pesky ratings services.

For the old Graduation Rate measure, institutions were asked to identify a “cohort” of students who entered as first-time (they’ve not attended college before) and full-time (they are taking a full load of courses) in the fall of a given academic year. Institutions track and report what happened to those students after a set number of years.

The new Outcome Measures asks institutions to expand this tracking by adding three additional cohort categories of students:

The first cohorts were compiled for the Fall 2008 academic term and the first dataset containing results was released last October. It is important to note that these data are for all students in a given cohort, not just distance education students.

WICHE’s Policy and Research Brief provided statewide and regional results for each of the western states. We wanted to see the impact on an institutional basis and gauge some variations among institutions in a similar setting.

Given that community college leaders have long complained that the Department of Education’s Graduation Rate does not provide an accurate profile of their students, we thought that we should look at that sector. We chose community colleges in Colorado for several reasons:

First, let’s look at the input into the Outcome Measures statistic. Which students are included?

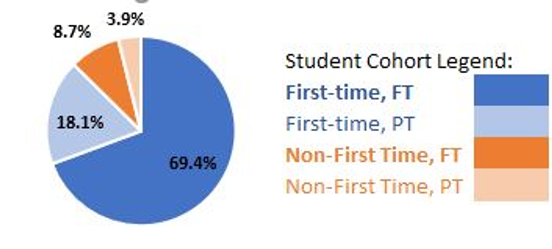

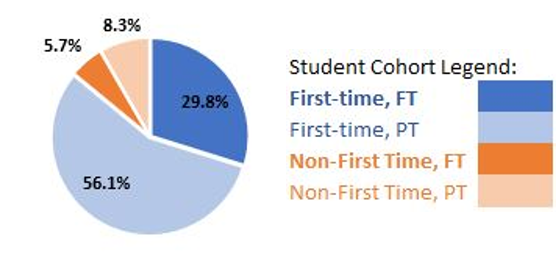

Community colleges may be the group best served by the expanding view of the Outcome Measures statistic. The Graduation Rate (previously the only measure of what happened to those admitted to an institution) includes only students who were first-time (they are new to higher education) and full-time (they enrolled in a full load of courses). Especially for urban institutions with a focus on working adults and those returning to college, this leaves out the bulk of the students they serve.

This fact is starkly highlighted in the WICHE brief on the western states, which found that only 29% of all community college students in the western states were first-time, full-time students. That means that 71% of community college students in the west were likely not included in the Graduation Rate counts. In our opinion, that is a serious undercount.

For Colorado’s community colleges, the percentage of students who were first-time, full-time students is similar to that of the other western states, at 31.2% of enrollments. Therefore, 68.8% of community college students in Colorado (almost 15,000) were likely not included in the Graduation Rate counts.
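The arithmetic behind these undercount figures is simply the complement of the first-time, full-time share. A minimal sketch, assuming the percentages quoted above; the function name `share_not_counted` is our own and is not part of IPEDS or the WICHE brief:

```python
def share_not_counted(first_time_full_time_pct: float) -> float:
    """Return the percentage of students outside the first-time, full-time cohort.

    These are the students the traditional Graduation Rate likely leaves out.
    """
    if not 0.0 <= first_time_full_time_pct <= 100.0:
        raise ValueError("percentage must be between 0 and 100")
    return round(100.0 - first_time_full_time_pct, 1)


# Percentages quoted in the text above.
western_states = share_not_counted(29.0)  # WICHE brief, western states
colorado = share_not_counted(31.2)        # Colorado community colleges

print(western_states, colorado)
```

The same function can be applied to any institution's first-time, full-time percentage to see how much of its entering class the Graduation Rate overlooks.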

Looking at the numbers more closely, in Colorado there is a huge rural/urban divide in serving first-time, full-time students:

This is not surprising, as rural community colleges may tend to serve students in their immediate area. Their enrollments may reflect the desire of traditional age students to begin their college experience locally. It also could be a tribute to those colleges offering programs that are designed to lead to employment locally. On the other hand, urban institutions, due to the make-up of their local population, may recruit and serve more adult and returning students.

Why does this matter? If only the old Graduation Rate is used, comparisons among institutions are not made on an equal footing. The Graduation Rate covers a much smaller percentage of students at urban institutions, so it is a poor representation of those institutions’ overall populations and activities.

In addition to granting degrees and certificates, the mission of many community colleges includes preparing students to transfer to other institutions. The Outcome Measures statistic now accounts for this basic goal. The low completion rates of community colleges have been cited as evidence of their ineffectiveness. Admittedly, some colleges deserve the criticism. But counting the students who transferred and those still enrolled gives a more complete picture of what happened to the students who entered.

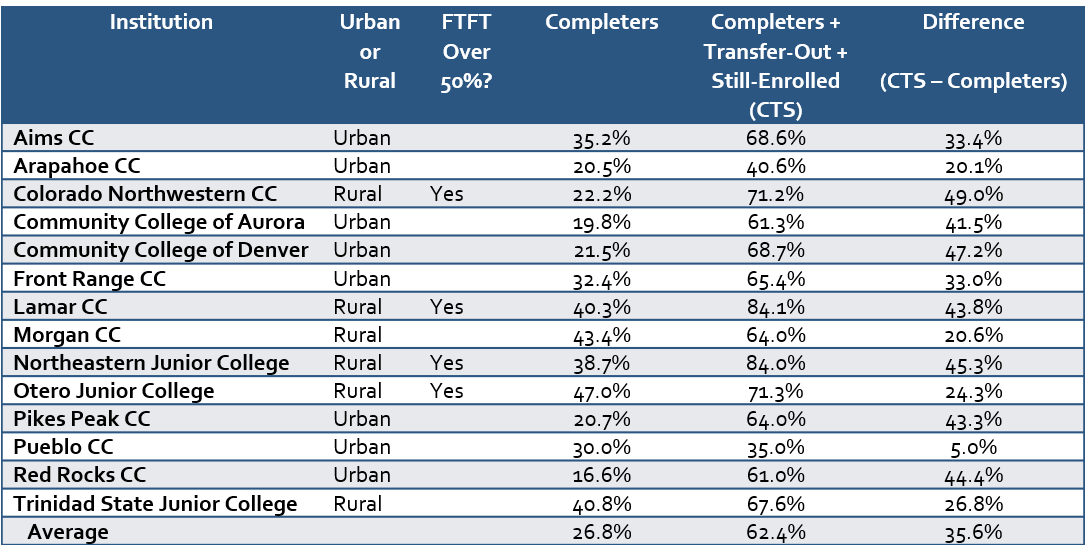

Below is a table of the results of the Outcome Measures data for students who entered each institution in 2008. The columns included are:

Our main observations from this table are:

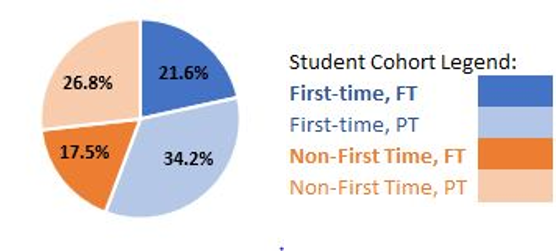

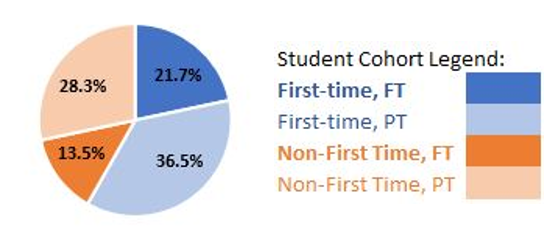

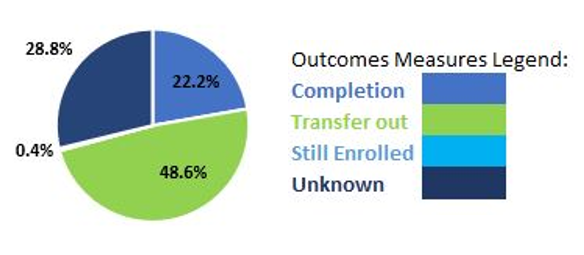

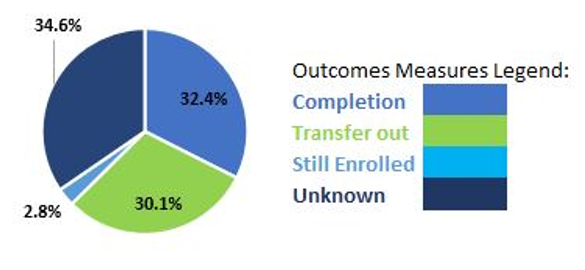

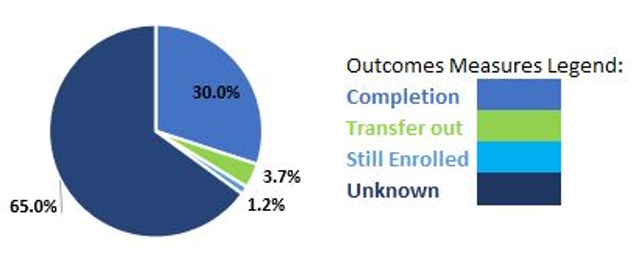

One of the benefits of Outcome Measures (OM) is to better understand the composition of the student cohorts at our institutions. While the IPEDS Graduation Rate includes only “first-time, full-time” students, Outcome Measures includes the four cohorts listed above. Let’s look at results for those institutions for each of the four cohorts…

Northeastern Junior College has the largest “first-time, full-time” entering cohort of students. The College is located in Sterling, CO, which has historically been a farming community. Located along the interstate about 130 miles from Denver, it is closer to Wyoming and Nebraska than to the capital city.

Morgan Community College has the largest “first-time, part-time” entering cohort of students. Located in Fort Morgan, Colorado, it is about 40 miles closer to Denver on the same interstate as Northeastern Junior College. It is interesting to note the differences in the entering student composition of these two rural institutions that are less than an hour from each other.

Arapahoe Community College has the largest “non-first-time, full-time” cohort of entering students. It is located in the southern suburbs of Denver. At 17.5%, the College has the highest percentage in this category, but the others are not far behind, with most colleges enrolling between 8% and 16% of their students in this cohort.

It is not surprising that the Community College of Denver, in the state’s largest city, leads with the most returning students who are studying only part-time.
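The cohort comparisons above amount to computing each cohort's share of an institution's entering class and then finding the college with the largest share. A minimal sketch of that calculation follows. The college names and counts are hypothetical placeholders, not figures from the IPEDS data or our spreadsheet, and the two part-time cohort labels are our assumption about how the cohorts are named.

```python
from typing import Dict

# Cohort labels; the two part-time labels are assumed for illustration.
COHORTS = (
    "first-time, full-time",
    "first-time, part-time",
    "non-first-time, full-time",
    "non-first-time, part-time",
)


def cohort_shares(counts: Dict[str, int]) -> Dict[str, float]:
    """Convert entering-cohort head counts into percentages of the total."""
    total = sum(counts[cohort] for cohort in COHORTS)
    if total == 0:
        raise ValueError("entering class has no students")
    return {cohort: round(100.0 * counts[cohort] / total, 1) for cohort in COHORTS}


def largest_share(colleges: Dict[str, Dict[str, int]], cohort: str) -> str:
    """Name the college whose entering class has the largest share in a cohort."""
    return max(colleges, key=lambda name: cohort_shares(colleges[name])[cohort])


# Hypothetical counts for two made-up colleges (not real data).
colleges = {
    "College A": dict(zip(COHORTS, (400, 100, 150, 350))),
    "College B": dict(zip(COHORTS, (100, 150, 50, 200))),
}

print(cohort_shares(colleges["College A"]))
print(largest_share(colleges, "first-time, part-time"))
```

Comparing shares rather than raw head counts keeps small rural colleges and large urban ones on the same scale.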

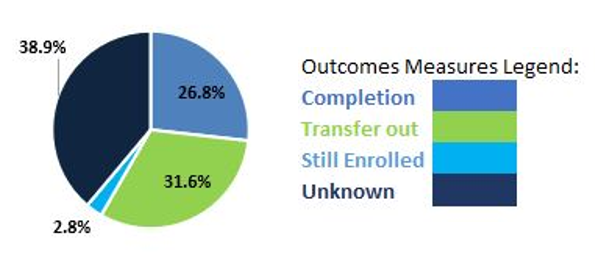

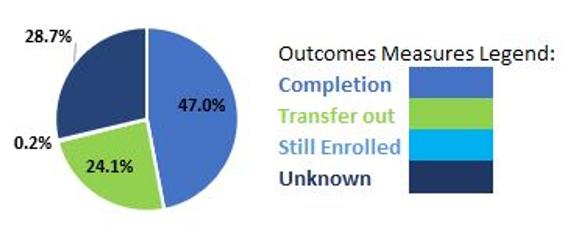

In addition to providing observations about the 2008 student cohort make up, the IPEDS Outcome Measures data also provides insights regarding the disposition of the students over time. Institutions report student outcomes in one of four categories:

The data reported here are at eight years since matriculation (as of Fall 2016). In addition to eight-year data, IPEDS also collects and reports outcomes at six years. We compared the six-year and eight-year data and found little difference. For simplicity and consistency, we decided to use only the eight-year outcomes in this analysis. It is also noteworthy that eight years beyond the matriculation date is four times the typical completion time for an associate degree.
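Because every student in a cohort lands in exactly one outcome category, the four rates for a cohort should add up to 100%. A minimal sketch of that calculation, using the category labels as they appear in this post (“completers,” “transfer out,” “still enrolled,” and “unknown”); the counts are hypothetical and the function name `outcome_rates` is our own:

```python
from typing import Dict

# Outcome labels as used in this post; treat the exact wording as an assumption.
OUTCOMES = ("completers", "transfer out", "still enrolled", "unknown")


def outcome_rates(cohort_size: int, counts: Dict[str, int]) -> Dict[str, float]:
    """Return each outcome as a percentage of the entering cohort.

    Raises ValueError if the outcome counts do not account for every student.
    """
    if cohort_size <= 0:
        raise ValueError("cohort size must be positive")
    if sum(counts[outcome] for outcome in OUTCOMES) != cohort_size:
        raise ValueError("outcome counts must sum to the cohort size")
    return {
        outcome: round(100.0 * counts[outcome] / cohort_size, 1)
        for outcome in OUTCOMES
    }


# Hypothetical eight-year outcomes for a made-up cohort of 500 students.
example = outcome_rates(
    500,
    {"completers": 150, "transfer out": 175, "still enrolled": 10, "unknown": 165},
)
print(example)
```

The consistency check is worth keeping when working with first-year data, since a cohort whose outcomes do not sum to its size points to a reporting problem rather than a real result.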

The Outcome Measures data for Colorado’s community colleges are reported below:

Continuing the tour of the state to the rural southeast, Otero Junior College is located in La Junta and has the highest completion rate. Three other institutions broke the 40% completion mark: Morgan Community College (43.4%), Trinidad State Junior College (40.8%), and Lamar Community College (40.3%). All of them are located in rural settings.

Completer rates vary greatly and often need local context. For example, Red Rocks Community College (in a middle-class to upscale suburb west of Denver) has the lowest “completers” rate at 16.6%. Its “transfer-out” rate is 39.0%, reflecting a possible suburban trend to start locally and transfer.

Colorado Northwestern Community College tops the list for “transfer out” colleges. It is located in remote Rangely, CO, near the border with Wyoming. It is about 200 miles northwest of Denver on the other side of the mountains. On a good day, it is a four-hour drive. The lure to start locally and transfer may be based on geography. Other two-year institutions with high transfer rates include Community College of Denver (45.9%, an urban college) and Lamar Community College (43.1%, a rural college).

Although the percentages are small across the board, Front Range Community College has the highest rate of students still enrolled at the institution without having completed a degree or certificate after eight years. Front Range serves Boulder, Fort Collins, and the northern suburbs of Denver. Other community colleges reporting notable “still enrolled” rates are also suburban institutions: the Community College of Aurora (2.3%) and Red Rocks Community College (2.2%).

Pueblo Community College has the highest rate of “unknown” responses, which we suspect may be due to problems with gathering data for this statistic in its first year of existence. Our suspicion rests on the unusually low “transfer out” rate that the college reports, especially since Colorado State University-Pueblo is only six miles away. Northeastern Junior College (16%) and Lamar Community College (15.9%) reported the lowest “unknown” rates.

We are still absorbing what this all means.

We definitely urge you to examine your institution’s Outcome Measures rates. Use our spreadsheet as a starting place. We should all demand that colleges, policymakers, the press, and online listing/ranking services use these data to better represent what might happen to a student who enrolls at your institution.

If we write more or have calls for action, we will let you know.

Meanwhile, what do you think? Let us know.

Russell Poulin

Director, Policy & Analysis

WCET – The WICHE Cooperative for Educational Technologies

rpoulin@wiche.edu | @russpoulin

Terri Taylor-Straut

Director of Actualization

ActionLeadershipGroup.com